The death of design

My wife often jokes that I draw boxes for a living. She’s not entirely wrong. For the uninitiated, it humorously sums up a good portion of how I appear to have been spending my 9-5s for a long time. I would like to point out that I also draw lines between the boxes, and occasionally break up all those boxes with circles (which the twisted amongst you could say are just boxes with infinite border radii). And most importantly, I move all those boxes about into an endless array of configurations to find the best fit—that being the feng shui aspect of design.

UX and product design (to the extent that we can separate those murky-fringed disciplines) have for a long time necessitated sitting in tools like a Moleskine, Figma, and FigJam (other tools are available). We rush through countless rounds of box-building and border-drawing to visualise and make connections between ideas that, by this process of agitation, surface themselves.

And now, a small, virtually unknown company based out of some dusty San Franciscan back alley has released a handful of tools that have, as if overnight, eradicated the need for this arcane specialism.

Anthropic have achieved something very impressive with Claude, managing even to win over an old cynic like me. ChatGPT always left an odd taste in my mouth, and Gemini leans into workspace efficiency in just the right, emotionally sensible way that I would prefer from Google, with whom I’d rather spend as little time as necessary. But sometimes, using Claude, I laugh at my desk. I wave my colleagues over to show them what I’ve made. I’m repeatedly bowled over by how effectively it interprets my prompts, progressively discloses clarification flows to keep me sufficiently and humanly looped in, and generates outputs that I find shockingly close to what I saw in my mind’s eye when prompting.

It makes me feel smarter and more skilled than I really am. And that is how Anthropic have effectively drawn so many of us to dance to their tune. There is something about the way in which Claude (Code) has wooed CEOs and surprised engineers that almost makes ChatGPT’s 2024 ascension seem minor league.

But the more I use it, the more it feels like software. Not magic. Not sparkles. It’s a tool. In the way that a great ending is both unexpected and inevitable, this feels like a natural and sensible progression. Whether this is part of a ‘paradigm shift’ that so many commentators seem to enjoy using up endless hours of YouTube oxygen to claim, I do not know. The dust is still a way off from settling. And although I recognise that humans design the tools that redesign humans, my instinct tells me that this is evolution dressed up as revolution.

So why is it that a tool that especially excels at text prediction (I recognise we’ve also been blessed with Claude ‘Design’, but I’d like to give that some time to bed in before I comment on it), and carries the word ‘Code’ in its name, appears to be throwing the design world into disarray and despair?

The death of design

“Reports of design’s death have been greatly exaggerated.”

Wise words from Mark Twain (ChatGPT, 2026).

In a recent podcast interview between Honest UX Talks’ Anfisa and Ioana, the under-discussed topic of the future of UX design came up, and Ioana wrote up some extended thinking on the topic on AI Goodies.

The piece is worth a read, particularly for the handy advice Ioana provides towards the end. However, there are some ideas that tripped me up, and are symptomatic of a broader thread in our industry discourse that I’ve found slightly uncomfortable: that the majority of digital product tooling will increasingly be replaced by AI agents and a slew of new concepts that have quickly gained acceptance without due consideration of whether or not they actually make any sense.

Humans interact with hundreds, if not thousands, of human-computer interfaces every day, and we barely realise it. Every time we press a button to tell a crossing system to illuminate the light that will change to green and sound a jingle to inform us it’s safe to cross, we interface with a chain of complementary multimodal interfaces, each aspect of which was designed. I’m excited to see how AI will be deployed to help improve urban planning, design, and the smooth running of cities. But I don’t see that crossing button going anywhere!

So, do we even need interfaces anymore? In asking this question, Ioana is referring to the software interfaces we typically interact with: the dashboards and onboarding flows and widgets we click and slide and type in and tab through. Will AI agents like Claude and co do away with software as we know it?

I have two concerns with this framing: firstly, the definition of an interface and the act of interface design extend far beyond the systematised buttons and banners we commonly refer to when we talk about ‘UI’; and secondly, the assumption that the chatbot paradigm is so superlatively effective that it can fell traditional software interfaces with one fell swoop seems… a little far-fetched to me.

This misunderstanding is compounded by the repeated use of ‘conversational’ design when referring to chatbots. Ioana suggests that “Voice and conversation are becoming primary interaction modalities”. I’d challenge the assumption that voice is becoming a primary interaction modality; I still see little evidence of it beyond asking my smart speaker to skip tracks. And as for conversation? Well, Erika Hall said it best when she wrote an entire book about how good interface design is always conversational in nature.

I may sound like I’m being needlessly picky. After all, it’s quite clear what Ioana’s getting at, especially when she brings up the idea of generative, non-deterministic UI. But these ideas matter, and the language we use to express these ideas matters. At a time when our industry is experiencing such flux and anxiety, I believe it is more important than ever that we choose our metaphors wisely.

So, what is it that digital product or UX designers actually design? When we talk about the collapse of user interfaces into the yawning void that is the chatbot input field, do we not risk belittling the value that design actually brings to the table? It was never about buttons. But for what it’s worth, there will still be buttons. Because humans love buttons.

Our job is one of translation. It is to communicate the conceptual model of a software system to the many human minds that will interact with that system, each mind holding its own mental model, informed by their own experience of the world. Our job is to learn how we can shape the software system in ways that provide increasing utility while making that conceptual mapping clearer.

Note the word ‘learn’ there.

In the same way that one writes in order to understand what one is writing, one designs in order to understand what one is designing. As Karri Saarinen explains, “Working visually keeps me close to the problem and is slow enough gives [sic] me time to think while I work. Moving things around, testing relationships, and refining structure is not separate from the thinking. It is part of how clarity emerges.”

The Figma spec is proof of a process, and it is often that which has been discovered during the journey to the spec that is more valuable than the spec itself. The underappreciated value of the design crit is not the feedback received, but how a designer comes to truly understand what they have designed in the act of defending it. Design is, after all, a verb before a noun.

Conversations with computers

I worry that we have become too enamoured of the chat interface. Typing prompts and receiving walls of text in response may be one of the most ineffective and exclusionary methods of mapping the shape of a software system and gathering human input. Voice will always be problematic for several reasons—the number one being that we typically interact with software in contexts where speaking is inappropriate: public transport, the office, cafés, in noisy environments, or simply in places where we’d rather others not hear what we’re saying. That’s before we consider those with speech impediments or accents that don’t fit the mould of speech patterns upon which most models are trained.

As for that pesky notion of ‘conversational’ interfaces, otherwise known as… a text field, well, that’s all well and good if you’re a competent typist with an appropriate level of cognitive and linguistic fluency to craft a prompt. It sure is wonderful to write out full-length commands in order to perform simple tasks when your fingers are cramping up with cold, arthritis, or RSI. What too few people are talking about right now is the notion that chat interfaces, whilst perfect for certain tasks that require high technical proficiency, are for so many other instances quite possibly the weakest choice of affordance.

We should know better. The UX design community has spent the past thirty years fighting for more universally inclusive and accessible human-software interfaces. To believe that so much of that work can be rolled up into the softly bevelled maw of an agentic input field that implores us to “Ask it anything” does a disservice to the progress we’ve made.

One solution to this, as Ioana points out, is generative UI. Or ‘on-demand’ UI. Wait—hold on! You mean all those buttons and forms and sliders we just threw away? Although generative UI will certainly become a much bigger part of how humans interact with software, judging from my own experience designing an agentic UI library to support non-deterministic agent ‘conversation’ flows, there may be more work here for designers than ever before.

Agents contra mundum

Yes, it involves shaping protocols. The rules, guidelines, constraints, and evals that steer what an AI agent can do. And there is still plenty of Figma work to be done for those who, like myself, love their boxes. Agentic interfaces still sit within software ecosystems that still need menus and dropdowns and up-sells and cross-sells and notifications and banners and animations and translations and illustrations and all that jazz.

But how much software actually needs to be production-lined into agentic exchanges? I understand how easy it is to get carried away after vibe coding your first prototype or configuring your first autonomous agent to handle some daunting admin tasks, and believe that this is the future of software. But as a form of human-computer exchange, for how many tasks does this pattern really make sense? Just because an agent can generate interface elements on the fly, how many scenarios are there in which that is actually necessary?

I ask with genuine curiosity. Because it may well be that—having studied how software works for the best part of two decades—I am unable to truly escape the biases and patterns with which I see that world. And this is an interesting point that Ioana makes: “This might be one of those rare moments where junior designers can compete with senior ones by being proactive, early to AI, more creative, more willing to think differently.”

I love that idea. Because I remember feeling that way myself when I first started dabbling in web design. Everything was new to me. Unburdened by convention, I was overflowing with ideas and a will to experiment. I didn’t know the right or wrong way to do things. And that openness may well be an antidote to the sameness and mediocrity that so many in the industry fear AI usage is leading us towards. I can only hope that hiring managers start to see things this way.

Scope creep

Speaking from personal experience, one of the game-changing opportunities AI tools like Claude Code have gifted me is the ability to take the type of ideas I have been trained to pre-empt as out-of-scope, and rapidly de-risk and realise them. For example, I recently thought it would be cute to add a mini game to the loading state of a compute-heavy process that may keep users waiting anything from twenty seconds to two minutes. How could we turn a pain point into a moment of delight and brand tie-in without tripping into kitsch or cliché?

If, in the past, I had wished to design a mini game for an interstitial loading state, the weight of over a decade of scoping discussions would likely have crushed the butterfly before it had the chance to flee the cocoon. Even if I’d had the temerity to mock up a design and a crude Figma prototype, it surely would have been a nice-to-have, slipping into a fast follow, then further into version 2, and then, finally, banished to the land of no return: the ill-fated back-burner.

You know how it goes. But thanks to a playful thirty minutes or so with Claude, accompanied by an equally playful sixty minutes or so in Figma, and a little back-and-forth between the two, I had in my digital hands a working HTML file that could, with little more work than a placeholder image, be dropped into the working product by my esteemed engineering colleagues.

This is a small example of one of the many ways generative AI is helping me extend beyond my previous capabilities, and hopefully all in the service of designing better user experiences and better software.

Here are some other examples of how LLMs have assisted me in my design process over the past year:

- I use AI in FigJam to group and identify patterns across findings from stakeholder alignment workshops, helping us get a better sense of the organisational assumptions we hold about our target customers and the opportunity space.

- I use Notion AI to suggest research questions and ideas for interview scripts, based on prior insights and project goals. I use perhaps twenty per cent of these suggestions; they’re not all that good. But seeing something written down first helps jump-start my own imagination.

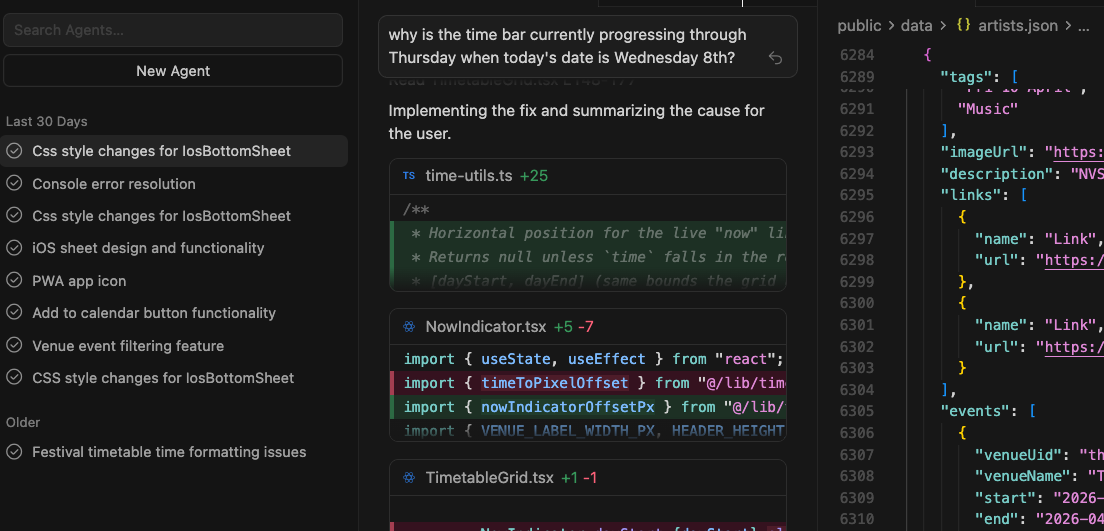

- I use Claude Code or Cursor (or sometimes jump between the two—always hitting those darn token limits!) to develop interactive prototypes to test.

- I use a combination of Gemini and Notion AI to synthesise my messy user interview notes and transcripts.

- I use Claude Cowork to help suggest the nodes for agentic conversation flows. I then mock these up using good old wireframing in Figma: this is still the best artefact for visualising the entire tree of possibilities, gathering stakeholder feedback, quickly moving nodes and elements around, and refining the flows.

- I then feed these refined wireframe flows into Claude Code to rebuild my earlier prototype, for a further round of user testing: always be continuously discovering!

I find AI a great tool for unblocking me when I can’t quite nail a particular element—especially if it is a complex interaction that, as a designer, I need to feel in a way that flat pixels would never have offered. Figma’s major flaw for me has always been the way it focuses the designer on space rather than time, and for years, it has featured a grossly underdeveloped prototyping capability that has, if I am being honest, failed designers.

But I am not asking Claude to spit out full interfaces for me. The quality is not there, and the reality is that I have not seen much evidence of genuine inspiration. The output (and output is the only real word there is for it) of LLMs, by their nature, tends to regress towards the mean. Average, tidy, and predictably banal. If you have a designer’s soul, and you have used these tools, you know damned well it’s true.

As Nick Foster laments in a recent Dezeen interview, “The apps I use to hire plumbers look and feel remarkably similar to those I use to watch skiers do backflips. Every brand feels the same, every function feels the same, every interaction feels optimised, streamlined and joyless. By any measure, these pieces of software are miracles of engineering and triumphs of logic, yet they feel profoundly underwhelming to live with.”

What designers do…

One of the most repeated arguments I’ve heard over the past six to twelve months is the idea that taste is the quintessential element that designers will continue to bring to the table. Well, I’ve written before about that mercurial quality of taste. The uncomfortable truth is that most people, when presented with the abracadabra output of an LLM, are so impressed with what it can do that they risk overlooking the question of what it should do.

If you call yourself a designer and—be honest with yourself—the bulk of your role has been the production of flat pictures of user interfaces, then I’m sorry to break it to you, but you are not designing. You are styling.

Good designers have always been those who push back on the assumptions held within their teams, the wider organisation, or the industry at large. That responsibility, if we can nurture it, is becoming more important than ever. Perfecting the pixels of a Figma mockup was only ever the iceberg tip of the value that design can provide.

So much has changed, and yet so little has changed. In 2003, Steve Jobs explained that design is not just what it looks like and feels like; it’s how it works. Half a century before that, Charles Eames wrote that “Any time one or more things are consciously put together in a way that they can accomplish something better than they could have accomplished individually, this is an act of design.” Design is the conscious coupling of how and why, two childlike interrogatives so seemingly simple when written down, yet so seldom asked. So shallowly explored.

Blaming the tools

The best makers (like Charles and Ray Eames) are intimately familiar with their materials. AI coding tools have dramatically lowered the ‘material’ barrier for designers previously unfamiliar with code, or lacking the time and creative space to fully explore their ideas. Rather than offering a way to reduce design headcount, AI tools should be seen as just that: tools. And when wielded by skilled, creative, ethically informed and empathic design specialists, they offer unforeseen opportunities to build demonstrably better, more human, more relevant, more accessible, and more useful design solutions, more cheaply, and for more people than ever before.

But that is only possible with teamwork and healthy collaboration, rather than the current ‘three-way standoff’ Marc Andreessen claims to be witnessing: “While the future of this stand-off remains murky, it seems clear that the industry is currently in the middle of an organizational flux. New AI tools are constantly blurring the lines between these three roles, creating new positions that blend elements of each. In the future, there will almost definitely still be need for human coding, designing, and product managing skills—but we may not define each of those jobs the same way.”

I recognise that I write leaning too much on the authority of my own experience, but was there anything previously stopping a designer from writing a PRD or learning to code, other than time and experience? It is no bad thing that boundaries between these roles blend. When every member of a product team approaches challenges more holistically, supporting colleagues rather than jostling for status and definition, we can deliver more robust and genuinely creative (push for weird) interventions.

It appears that designers are once again having to prove their worth to business leaders. We’ve been here before. The CEO’s nephew with a Lovable sub can always do it for free.

However, I am cautiously optimistic that as we weather this historical conjuncture, and machine intelligence loses its sparkly aura, and weekend vibe coders increasingly learn how substantial the gap is between a prototype and a product, the role of design, however it is redefined, will be just as essential as it ever was. We need to position ourselves for that. Generative AI provides the illusion of possibility but evades responsibility. Embrace these tools, but never lose critical perspective. Find their limits to better help you identify the flex and tolerance of the materials.

When the weekend demos lose their lustre and the hard problems remain, there will be plenty left to do. Not despite the machines, but thanks to them.

Disclaimer: No part of this essay was generated using AI, but I did use Grammarly and Quillbot (hiya) for grammar checking and clarity recommendations.

Further reading

- Andreessen, Marc. “Why Are Designers, Engineers, and Product Managers in a Three-Way Stand-Off?” Fast Company, https://www.fastcompany.com/91519219/why-are-designers-engineers-and-product-managers-in-a-three-way-stand-off. Accessed 28 Apr. 2026.

- “Conversational Design for You.” Mule Design, https://www.muledesign.com/blog/conversational-design-for-you. Accessed 28 Apr. 2026.

- Foster, Nick. “Nick Foster on How AI Has ‘Broken’ Software Design.” Dezeen, 3 Mar. 2026, https://www.dezeen.com/2026/03/03/software-design-ai-nick-foster-opinion/?utm_source=substack&utm_medium=email. Accessed 28 Apr. 2026.

- Ioana. “The Future of Design Jobs.” AI Goodies, https://aigoodies.beehiiv.com/p/future-of-design-jobs. Accessed 25 Apr. 2026.

- Saarinen, Karri. “Output Isn’t Design.” Linear, https://linear.app/now/output-isn-t-design. Accessed 28 Apr. 2026.

- “AI is making CEOs delusional.” YouTube, uploaded by Mo Bitar, https://youtu.be/Q6nem-F8AG8?si=kyqAAiAQP6VzZLI6. Accessed 26 Apr. 2026.